One of the elements of training neural networks that I’ve never fully understood is transfer learning: the idea of training a model on one problem, but using that knowledge to solve a different but related problem. I’m aware of the general idea – that it should be possible to reuse knowledge from one problem space on a different but related problem space – but the idea that a small amount of training on a small dataset can meaningfully improve the performance of a neural network is still strange to me.

That said, regardless of how strange it seems to me, from my experiments this weekend, it is clearly extremely effective.

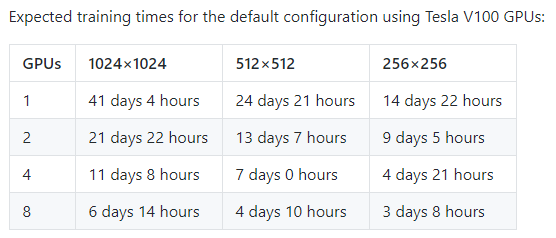

After investing hundreds of hours into training StyleGAN models from scratch, I followed the advice of a fellow StyleGAN aficionado on Twitter, and instead started from the existing models and simply continued training on a new set of images. What I found absolutely amazed me: with a single training ‘step’ – feeding only 40,000 total images into the network for training – the model had immediately learned tons of specifics about how dining rooms, living rooms, and kitchens worked.

This image shows a side-by-side of four randomly selected rooms produced by the kitchens model before and after the first round of training: It starts out as the bedrooms model, and turns into a kitchens model after a small amount of training.

On the left: bedrooms; on the right: kitchens.

I’m no expert in neural networks: I don’t have any specific experience that informs me how these things work. But the model’s ability to learn almost immediately how to create images that represented different classes suggests to me that a large amount of the structure in these models represents features that are the same between different types of rooms.

Now, again speaking as a layman: This makes sense. Many of the features of rooms are the same, of course! Walls, windows, combinations of colors: all of these things are fundamental properties of images of all rooms. And many of the other aspects that we see in rooms have a lot of similarities: from a picture, a kitchen table is just a slightly taller living room table; a couch is just a shorter, lower bed, covered in similar patterns.
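As a toy illustration of that intuition (not StyleGAN itself), here is a minimal sketch in which two tasks share most of their structure. Everything in it is an assumption made for illustration: a plain linear model stands in for the network, `w_a` plays the role of the "bedrooms" task, and `w_b` is a slightly perturbed "kitchens" task. It compares a few fine-tuning steps from the pretrained weights against the same number of steps from scratch.

```python
import numpy as np


def mse(w, X, y):
    """Mean squared error of the linear model X @ w against targets y."""
    return float(np.mean((X @ w - y) ** 2))


def train(w, X, y, steps, lr=0.1):
    """Run plain gradient descent on the MSE loss and return the new weights."""
    w = w.copy()
    n = len(y)
    for _ in range(steps):
        grad = 2.0 / n * X.T @ (X @ w - y)
        w -= lr * grad
    return w


def compare_warm_vs_scratch(seed=0, dim=10, n=500, finetune_steps=5):
    """Return (warm_loss, scratch_loss) on task B after a few training steps."""
    rng = np.random.RandomState(seed)
    w_a = np.linspace(1.0, 2.0, dim)      # hypothetical "bedrooms" task
    w_b = w_a + 0.1 * rng.randn(dim)      # hypothetical "kitchens" task: mostly the same
    X_a, X_b = rng.randn(n, dim), rng.randn(n, dim)
    y_a, y_b = X_a @ w_a, X_b @ w_b

    # Long training on task A, then a short fine-tune on task B.
    pretrained = train(np.zeros(dim), X_a, y_a, steps=200)
    warm = train(pretrained, X_b, y_b, steps=finetune_steps)

    # Same short budget on task B, but starting from nothing.
    scratch = train(np.zeros(dim), X_b, y_b, steps=finetune_steps)
    return mse(warm, X_b, y_b), mse(scratch, X_b, y_b)


if __name__ == "__main__":
    warm_loss, scratch_loss = compare_warm_vs_scratch()
    print("fine-tuned from task A:", warm_loss)
    print("trained from scratch:  ", scratch_loss)
```

With the same handful of steps, the warm-started model only has to learn the small difference between the two tasks, while the from-scratch model has to learn everything. A real GAN is far messier than this, so treat it as an analogy rather than an explanation.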

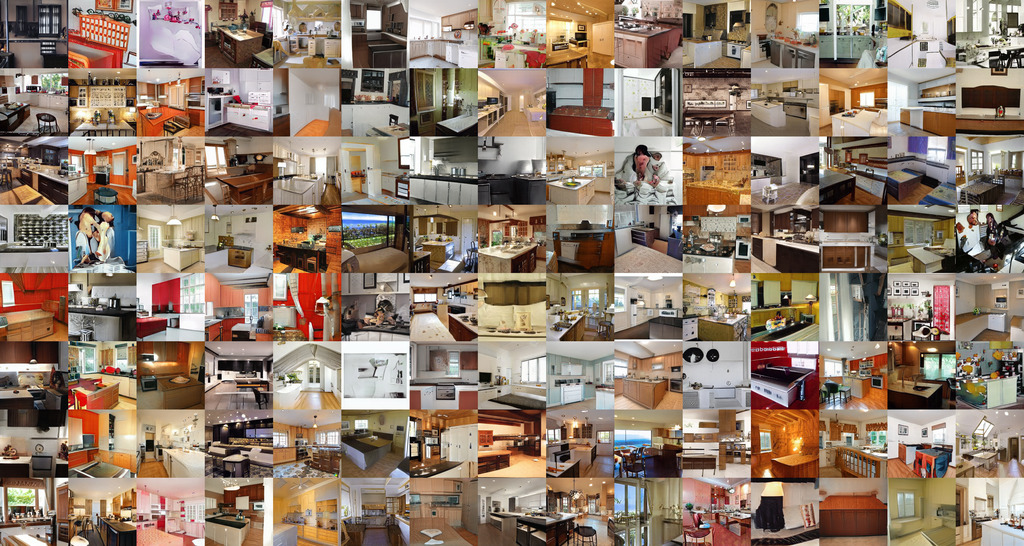

When training StyleGAN, each step of the training process produces a grid of images based on the same random seed. This is an easy way to visualize the results of the training. What I was most surprised by is that after just one step, these images looked like the rooms they were meant to be replicating.
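The fixed-seed idea can be sketched roughly as follows. This is not StyleGAN's actual code: `toy_generator` is a hypothetical stand-in for a real generator, and the latent size of 512 is an assumption for the sketch. The point is only that drawing the latents from the same seed at every snapshot makes each tile of the grid directly comparable across training steps.

```python
import numpy as np

LATENT_DIM = 512  # assumed latent size for this sketch


def fixed_latents(n_images, seed=0, latent_dim=LATENT_DIM):
    """Return the same latent vectors every time for a given seed."""
    rng = np.random.RandomState(seed)
    return rng.randn(n_images, latent_dim)


def toy_generator(latents, weights, size=8):
    """Hypothetical stand-in generator: latents -> (N, size, size, 3) images in [-1, 1]."""
    flat = np.tanh(latents @ weights)
    return flat.reshape(len(latents), size, size, 3)


def tile_grid(images, rows, cols):
    """Arrange (N, H, W, C) images into one (rows*H, cols*W, C) grid, row by row."""
    n, h, w, c = images.shape
    if n != rows * cols:
        raise ValueError("need exactly rows * cols images")
    grid = images.reshape(rows, cols, h, w, c).transpose(0, 2, 1, 3, 4)
    return grid.reshape(rows * h, cols * w, c)


def snapshot_grid(weights, rows=2, cols=3, seed=0, size=8):
    """Render a preview grid for the current weights using seed-fixed latents."""
    latents = fixed_latents(rows * cols, seed=seed, latent_dim=weights.shape[0])
    return tile_grid(toy_generator(latents, weights, size=size), rows, cols)
```

Calling `snapshot_grid` with the weights before and after a training step gives two grids where tile (r, c) comes from the same latent vector in both, so any difference between them is due to the change in weights alone.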

Grid of dining room images after one training loop.

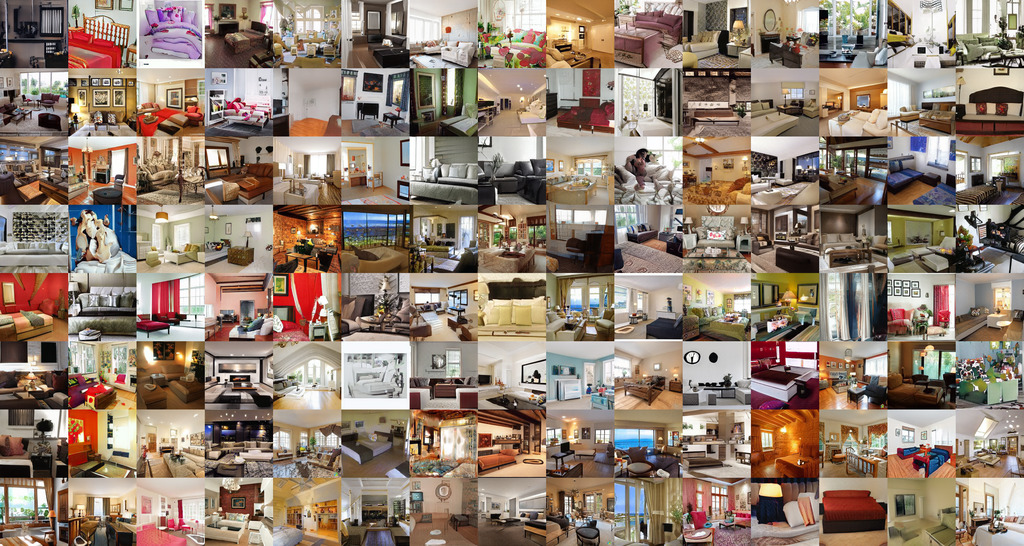

While continued training seems to allow these images to continue to improve – taking a long couch which started its life as a bed and converting it to a more reasonable looking couch, for example – overall, the training process consistently learns the vast majority of the changes in room type very quickly.

Grid of kitchen images after one training loop.

(If it feels like I’m harping on this point, you’re not wrong: I’m continually amazed by this!)

Grid of living room images after one training loop.

Originally, I was scared to use transfer learning for this task. I was worried there was too little similarity between kitchens and bedrooms, so I’d never see the model able to climb out of that rut, instead simply creating ugly bedrooms in place of kitchens. This effort has convinced me that this fear was entirely misplaced on my part.

To give a better sense of the training process, I’ve prepped a set of samples: Each shows the initial model (trained on bedrooms), and how it progressed over the course of ~2 days of training on a GTX 1080. The first step took about 1.5 hours of GTX 1080 training time. You can browse the samples on the Transfer Learning Demo Site.

The most interesting thing to me here is that once someone has trained a similar model, it becomes very straightforward for many other people to start from it and create a novel GAN of their own. This allows sites like This Waifu Does Not Exist to be created quickly.

With this in mind, the only question is: where do we go next?